it doesn't happen for ALL logfiles in /hosting/logs/ app123/, only some of them.

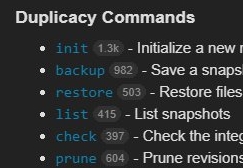

If so, what is/are suggested workaround(s)? And finally. Duplicacy is a new generation cross-platform cloud backup tool based on the idea of Lock-Free Deduplication. Is the crcSalt statement forcing /hosting/logs/ app123/abc.log to look like a different file than /hosting/logs/ myapp/abc.log, causing it to be indexed twice? `sudo mv duplicacy /usr/bin/` Initialize the remote storage and repository: the duplicacy init command is used to initialize the remote storage and the backup directory. because one replica is a newly created duplicate of the other. `chmod 755 duplicacy` STEP 5: Move duplicacy to the /usr/bin directory. Use the follow preference (see the Symbolic Links section) to make Unison treat these. But maybe Azure AD Connect wasn't configured with some of the scenarios in mind from the preceding list. One entry for /hosting/logs/ app123/abc.log, and one for /hosting/logs/ myapp/abc.log. `mv duplicacy_linux_x64_2.7.2 duplicacy` STEP 4: Change the file permissions. The ImmutableId attribute, by definition, shouldn't change in the lifetime of the object. NOTE: In the actual inputs.conf, there are angle brackets surrounding SOURCE, but adding them in this text input box seems to invoke some markdown directive, so I removed them. For certain logfiles, we see the same logfile entry twice. There is also a Duplicacy GUI frontend built for Windows and Mac OS X available from. This repository hosts source code, design documents, and binary releases of the command line version of Duplicacy. It is happening in a directory that is pointed to with a symbolic link (i.e., "/hosting/logs/ myapp/" is a symbolic link for "/hosting/logs/ app123/"). Duplicacy is a new generation cross-platform cloud backup tool based on the idea of Lock-Free Deduplication. Either way, this number should line up with the number of pages that you manually created. Or check out your indexed pages in the Google Search Console.
With just a few clicks, you can effortlessly set up backup, copy, check, and prune jobs that will reliably protect your data while making the most efficient use of your storage space. You can do this by searching for site: in Google. Since Duplicati supports S3, B2, GDrive, and many others, I back up directly to the cloud, as well as to my local storage. Remove the marked, unmarked or all items - Replace the removed files with hard links, symbolic links or aliases - Move or copy the items to a specific. Duplicacy comes with a newly designed web-based GUI that is not only artistically appealing but also functionally powerful. We are seeing duplicate logfile entries in our Search results with certain logfiles. One of the easiest ways to find duplicate content is to take a look at the number of pages from your site that are indexed in Google.
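To check whether the duplicate logfile entries come from the same file being reachable through both the real directory and the symbolic link, one option is to group candidate paths by the file they resolve to. The following is a minimal sketch under that assumption, not a Splunk feature: the function name `group_by_real_path` is hypothetical, and the paths in the usage note are simply the ones mentioned in the question.

```python
import os
from collections import defaultdict


def group_by_real_path(paths):
    """Group paths by the canonical file they resolve to (hypothetical helper).

    Returns only the canonical files that are reachable through more than
    one of the given paths, e.g. once directly and once via a symlink.
    """
    groups = defaultdict(list)
    for path in paths:
        # realpath follows symbolic links in every component of the path.
        groups[os.path.realpath(path)].append(path)
    return {real: sorted(found) for real, found in groups.items() if len(found) > 1}
```

Calling it with the two paths from the question, for example `group_by_real_path(["/hosting/logs/ app123/abc.log", "/hosting/logs/ myapp/abc.log"])`, would return a single group if `myapp` really is a symlink to `app123`, and an empty dict if the two paths are distinct files.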
